AI Privacy Crisis: When Business Secrets Go Public

Thousands of private ChatGPT conversations appear indexed on Google. Anthropic radically changes its privacy policy. September 2025: the month redefining trust in artificial intelligence.

The Double Blow Shaking the Business World

This week we have witnessed two simultaneous crises that are redefining how companies must think about artificial intelligence. This is not just a technical problem: it is an existential crisis for any organization using AI in its daily operations.

FRONT 1: The ChatGPT Scandal – Thousands of Conversations Exposed

The scale is alarming: in August 2025, thousands of ChatGPT conversations that users considered private appeared in the results of Google, Bing, and other search engines. The culprit was not a hack but an OpenAI feature, since discontinued, labeled "Make this chat discoverable."

How exactly did it happen? When users created a share link, an opt-in checkbox allowed the shared page to appear in web searches. Once it was ticked, the conversation became a public web page that search engines could crawl and index. Many users did not understand what the option meant, so conversations they believed were private became reachable with a simple search.

Real cases exposing the risk:

- Users sharing complete CVs with sensitive personal information

- Mental health conversations where people seek psychological help

- Confidential legal queries treating ChatGPT as a lawyer

- Sensitive business information discussed without knowing the risks

- Personal medical data from patients consulting about symptoms

Current status: Although Google has begun limiting the visibility of these conversations, many remain available on other search engines and the reputational damage is already done.

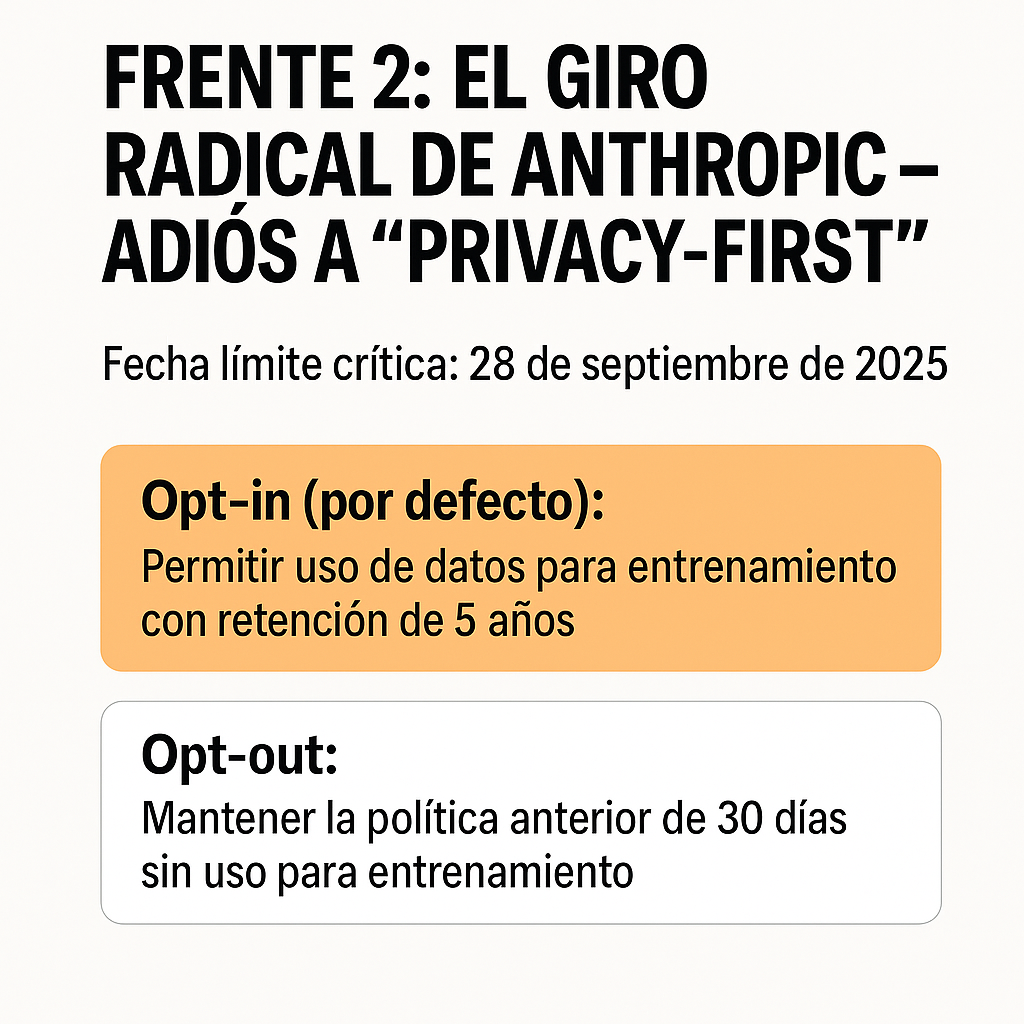

FRONT 2: Anthropic's Radical Shift – Goodbye to "Privacy-First"

The most impactful change of the year: Anthropic, which positioned itself as the "privacy-first" alternative to ChatGPT, has just completely reversed its data policy.

The fundamental change:

- Before: Claude did NOT use user data to train models (30-day retention)

- Now: By default, it WILL use your conversations for training (retention up to 5 years)

Critical deadline: September 28, 2025

By this date, every consumer user must make an explicit choice:

- Opt-in (default): Allow data use for training with 5-year retention

- Opt-out: Maintain the previous 30-day policy without use for training

Important note: This change does NOT affect Claude for Work (Team and Enterprise plans) or the Anthropic API – only consumer accounts (Free, Pro, and Max).

The Business Implications Are Immediate

Legal and Compliance Risks

- Violations of confidentiality agreements with clients and partners

- Exposure of privileged information that could result in lawsuits

- Non-compliance with regulations like GDPR, HIPAA, or sector-specific rules

- Corporate liability for third-party data leaks

Impact on Reputation

- Loss of trust from clients and investors

- Compromised competitive advantage due to exposed strategies

- Communication crisis difficult to manage

- Long-term damage to brand perception

Immediate Operational Costs

- Emergency audits of all AI usage

- Legal review of potentially exposed conversations

- Urgent implementation of new security policies

- Emergency training for all employees

Immediate Action Guide for Companies

URGENT ACTION – Next 48 Hours

1. Emergency Audit

- ☐ Identify who in your company uses ChatGPT and Claude

- ☐ Check if anyone has used ChatGPT's "share" feature

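- ☐ Search Google for site:chatgpt.com/share "your company" and for site:chat.openai.com "your company" (the older domain), plus related terms such as product or client names

- ☐ Document any exposure found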
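The search step above can be made repeatable with a small script that builds one query per company term and per share-link prefix. This is a minimal sketch: the prefixes (including grok.com/share, which is only relevant if staff also use Grok) are assumptions based on public reporting, the terms are hypothetical placeholders, and it deliberately stops at building URLs for a person to open rather than scraping search results.

```python
from urllib.parse import quote_plus

# Assumed share-link prefixes; confirm current URL formats before relying on them.
SHARE_PREFIXES = [
    "chatgpt.com/share",
    "chat.openai.com/share",
    "grok.com/share",
]


def build_audit_queries(terms):
    """Return one site-restricted query per (share prefix, term) pair."""
    queries = []
    for prefix in SHARE_PREFIXES:
        for term in terms:
            # Quote each term so multi-word names are matched as a phrase.
            queries.append(f'site:{prefix} "{term}"')
    return queries


def to_search_url(query):
    """Encode a query as a Google search URL for manual review."""
    return "https://www.google.com/search?q=" + quote_plus(query)


if __name__ == "__main__":
    # Hypothetical terms: replace with your company, product, and client names.
    for q in build_audit_queries(["Acme Corp", "Project Falcon"]):
        print(to_search_url(q))
```

Each printed URL can be opened by whoever runs the audit, and any hits recorded in the exposure log.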

2. Immediate Claude Configuration

- ☐ Access privacy settings before September 28

- ☐ Opt out of data use for training

- ☐ Verify the setting has been applied correctly

- ☐ Communicate the change to all corporate users

3. Emergency Policy

- ☐ Temporary ban on sharing ChatGPT links

- ☐ Mandatory review before using AI with sensitive information

- ☐ Secure channels for confidential discussions

MEDIUM-TERM STRATEGY – Next 2 Weeks

1. Corporate AI Policy

NEVER use public AI for:

- Confidential financial information

- Customer or patient data

- Non-public business strategies

- Privileged legal information

- Employee personal data

- Information under confidentiality agreements
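The "NEVER" list above, together with the mandatory review rule in the emergency policy, can be backed by a simple automated screen that runs before text is pasted into a consumer AI tool. The sketch below is hypothetical: the patterns only catch obvious cases (email addresses, card-like numbers, confidentiality markers), and a regex cannot recognize a business strategy or a legal question, so it supplements human review rather than replacing it. A dedicated data-loss-prevention tool would be the realistic choice in production.

```python
import re

# Hypothetical patterns for a pre-submission check; they catch only obvious cases.
PATTERNS = {
    "email address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "possible card number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "confidentiality marker": re.compile(
        r"\b(confidential|privileged|under NDA|internal only)\b", re.IGNORECASE
    ),
}


def flag_sensitive(text):
    """Return the sorted names of every pattern that matches somewhere in text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


if __name__ == "__main__":
    draft = "Q3 numbers are confidential, email jane@example.com"
    hits = flag_sensitive(draft)
    # Any hit means the text should go through manual review first.
    print("needs review:" if hits else "no obvious issues", hits)
```

An empty result only means no obvious markers were found; it does not mean the text is safe to share.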

2. Secure Alternatives

- Claude for Work or ChatGPT Enterprise (business plans whose terms exclude your data from model training by default)

- On-premise solutions for critical information

- Compartmentalization of information by sensitivity level
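Compartmentalization by sensitivity level can be written down as an explicit routing rule: each tool tier gets a ceiling, and information may only go to tiers whose ceiling is at or above its level. The four levels and the ceilings below are an assumed example policy, not a standard; adapt them to your own classification scheme.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical sensitivity levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Highest level each tool tier may receive (assumed example policy).
TOOL_CEILING = {
    "consumer AI": Sensitivity.PUBLIC,
    "enterprise AI": Sensitivity.CONFIDENTIAL,
    "on-premise model": Sensitivity.RESTRICTED,
}


def allowed_tools(level):
    """List the tool tiers whose ceiling is at or above the given level."""
    return [tool for tool, ceiling in TOOL_CEILING.items() if level <= ceiling]


if __name__ == "__main__":
    for level in Sensitivity:
        print(level.name, "->", allowed_tools(level))
```

Keeping the rule in one table makes the policy easy to audit and to change when a vendor's terms change.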

3. Training and Awareness

- Emergency sessions on AI privacy

- Real cases of what NOT to do

- Clear protocols for different information types

The Current Landscape: Beyond ChatGPT and Claude

Other Recent Cases

- Grok (X/Twitter): More than 300,000 private conversations also appeared indexed on Google

- Common pattern: "Share" features on AI platforms are creating massive exposures

- Worrying trend: Users treat AI as therapist, lawyer, and confidant without understanding the risks

Why Is This Happening?

- Poorly designed features: "Share" options were not truly private

- Lack of education: Users don't understand privacy implications

- Competitive pressure: AI companies prioritize features over privacy

- Data monetization: Training with user data is economically valuable

The Future of AI Privacy: What's Coming

Inevitable Trends

- More companies will follow Anthropic's model: Training with user data will become the norm

- Stricter regulation: the EU AI Act entered into force in 2024, and its obligations are being phased in through 2026 and 2027

- Market segmentation: "Enterprise" vs. "consumer" versions with radically different policies

- Forced transparency: Companies will have to be clearer about data use

Predictions for 2026

- Mandatory audits of AI use in regulated sectors

- Privacy certifications as a requirement for business contracts

- Specific insurance for AI risks and data leaks

- New roles: Chief AI Privacy Officer will be common in large companies

Changes in User Behavior

- Greater awareness of the risks of sharing information with AI

- Growing demand for truly private AI solutions

- Usage segmentation: Public AI for general tasks, private solutions for sensitive information

Lessons Learned and Final Recommendations

For Companies

- Never assume "private" means truly private on consumer AI platforms, free or paid

- Invest in enterprise solutions if you handle sensitive information

- Train your team on the real risks of AI

- Develop clear policies before an incident occurs

For Individual Users

- Treat every AI conversation as potentially public

- Read privacy policies and understand changes

- Use opt-out functions when available

- Consider private alternatives for sensitive information

Conclusion: The Time to Act is NOW

This is not a future crisis: it is happening in real time. The ChatGPT cases and Anthropic's change are only the first visible signs of a fundamental transformation in how the AI industry handles privacy.

Companies that act fast will gain a competitive advantage and avoid reputational crises. Those that ignore these warning signs will pay the price in the form of data exposure, legal problems, and loss of trust.

The question is not if your company will be affected by these changes. The question is: will you be prepared when your confidential information appears in a Google search?

The time to act is now. The cost of inaction may be irreversible.

Reference Resources and Links: