Why Your Company Needs AI Governance (Before It’s Too Late)

“It only applies to companies that create AI, not to us who just use it.”

This is probably the most dangerous belief regarding the EU AI Act. And it is completely false.

To be clear, the AI Act distinguishes between two roles with very different obligations. On one hand, there are Providers (those who create the AI, such as OpenAI or Google), and on the other, Deployers (any company that uses AI in its professional day-to-day). If your team uses ChatGPT for customer service, Copilot for programming, or any AI tool to make business decisions, you are a deployer. And yes, that means you have legal obligations.

Now, the important part that no one tells you: do not panic about the dates. The AI Act is being phased in, and the level of obligation depends largely on the risk of your use case. In February 2025, the first provisions began to apply: the ban on prohibited practices (things like social scoring, subliminal manipulation, or emotion recognition in workplaces and schools) and a general AI literacy duty for staff who work with AI. Using ChatGPT to write emails or reports does NOT fall under the prohibitions. Full obligations for high-risk systems (HR, credit, decisions affecting fundamental rights) do not arrive until August 2026. Below that level, there are only basic transparency requirements for chatbots and AI-generated content.

The reality is that most corporate uses of AI fall under “minimal risk” or “transparency.” But here is the real problem: many companies do not know how to classify their use cases. They use AI to filter candidates believing it is “low risk,” when in reality they are managing a high-risk system with strict obligations that come into force in 2026.

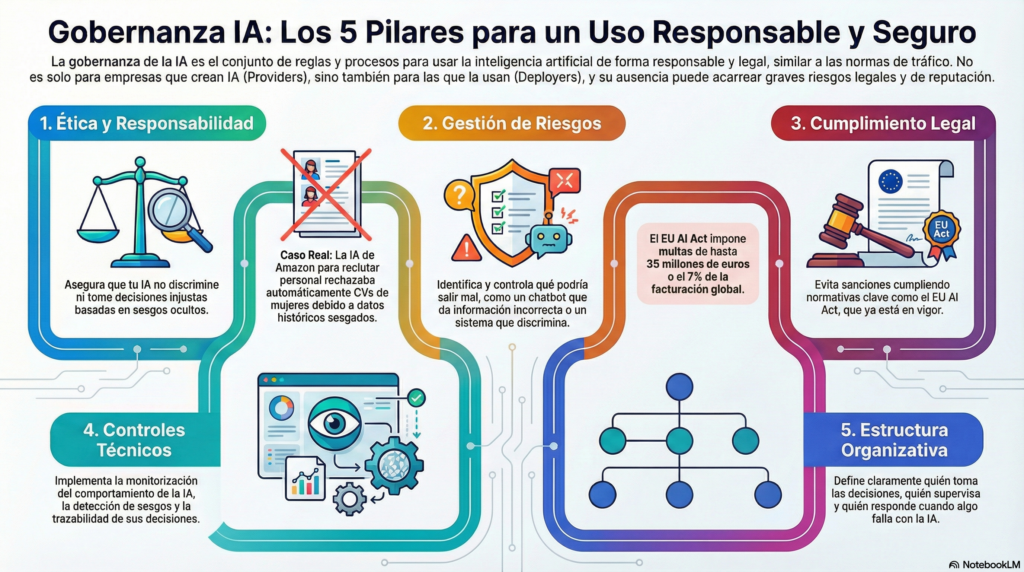

What AI Governance Really Is

If you do not have clear rules on how AI is used in your company (that is what governance means), sooner or later a system will discriminate against candidates without you realizing it, or your chatbot will give out incorrect information, and you will be the one paying the fine. It is as simple as that.

Think of it like traffic rules. You can drive without knowing them, but when you are fined (or cause an accident), no one will accept “I didn’t know” as an excuse. AI governance is basically a set of policies, processes, and controls that ensure you use artificial intelligence responsibly, legally, and in a way that doesn’t blow up in your face.

This covers five main areas. First is ethics and responsibility, which is more important than it seems. Does your AI discriminate without you noticing? It happens more than you think. Amazon developed an AI recruitment system that penalized women’s CVs because it was trained on biased historical data (mostly from male applicants). The company had to scrap it completely. Then there is risk management, where you ask yourself things like what happens if your chatbot gives incorrect medical information, or if your pricing system discriminates by zip code without your knowledge.

Legal compliance is obvious but brutal. For the most serious violations (prohibited practices), the EU AI Act establishes fines of up to 35 million euros or 7% of your global annual turnover, whichever is higher; lower ceilings apply to other breaches. And yes, the regulation is already in force. The last two areas are technical controls (behavior monitoring, bias detection, decision traceability) and a clear organizational structure defining who decides what, who supervises, and above all, who is accountable when something fails.
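To make the "35 million or 7%" figure concrete, here is a minimal sketch of the arithmetic. The two numbers come from the text above; the function name, the rule that the higher amount applies, and the SME variant (where the lower amount applies) are my reading of the Act's penalty provisions and should be checked against the official text before anyone relies on them.

```python
# Figures quoted in this article for the most serious infringements.
TOP_TIER_FIXED_CAP_EUR = 35_000_000
TOP_TIER_TURNOVER_PERCENT = 7


def top_tier_fine_ceiling(global_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the maximum possible fine (in whole euros) for the top tier.

    Assumption: the ceiling is the higher of the fixed cap and the
    turnover-based amount, or the lower of the two for SMEs.
    This is an upper bound, not a prediction of an actual fine.
    """
    if global_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    # Integer arithmetic avoids floating-point rounding surprises.
    turnover_based = global_turnover_eur * TOP_TIER_TURNOVER_PERCENT // 100
    pick = min if is_sme else max
    return pick(TOP_TIER_FIXED_CAP_EUR, turnover_based)
```

For a company with 1 billion euros in turnover, the turnover-based amount (70 million) is the one that counts; for a company with 100 million, the fixed 35 million cap is higher.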

The Problem No One Sees Coming

Most companies believe that AI is an “IT issue” or “for developers.” A huge mistake.

The real problem is that your employees are already using AI every day, often without approval. This is known as “Shadow AI”, and according to Gartner, 40% of executives report an increase in its use. This means marketing is using ChatGPT to generate client content without checking whether the information is accurate, HR is using CV screening tools that discriminate by age (without knowing it), sales is using AI for lead scoring that probably violates GDPR, and support is using chatbots that may be leaking confidential information.

None of these teams know they are “deployers” under the AI Act. None have evaluated if their use is high-risk. None document automated decisions when they should. And here comes the interesting part: they could all be exposing the company to million-euro fines and lawsuits if they do not correctly classify and manage their use cases before 2026.

Why This Affects You Even if You Don’t Sell AI

Here is what many people do not understand about the EU AI Act: it does not matter if your company is in Spain, Mexico, or Argentina. If you offer AI systems in the EU, or the output of your AI is used there (for example, to serve European customers), it applies to you. Much the same happened with GDPR. It also doesn’t matter if you “only use” AI and do not create it. The AI Act defines a “deployer” as any company that uses AI in a professional context.

That includes you.

The obligations already have a defined schedule, but they are not the same for everyone. In February 2025, prohibitions on unacceptable risk practices (social scoring, manipulation) came into effect. In August 2025, obligations for general-purpose AI models follow. The big deadline is August 2026, when full compliance for high-risk systems (HR, credit, health, education) kicks in. And high-risk AI built into products already covered by EU product-safety legislation has until August 2027.
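If you want this schedule in a form you can drop into a planning script, a minimal sketch could look like the following. The three milestones are the ones listed above; the exact day of the month (the 2nd) and the function names are assumptions added for illustration, so verify the dates against the official text.

```python
from datetime import date

# Milestones as described in this article. The day of the month is an
# assumption; the article itself only gives month and year.
MILESTONES = [
    (date(2025, 2, 2), "prohibitions on unacceptable-risk practices"),
    (date(2025, 8, 2), "obligations for general-purpose AI models"),
    (date(2026, 8, 2), "full compliance for high-risk systems"),
]

HIGH_RISK_DEADLINE = date(2026, 8, 2)


def obligations_in_effect(on: date) -> list[str]:
    """List the milestones that have already started on the given date."""
    return [label for start, label in MILESTONES if start <= on]


def days_until_high_risk_deadline(today: date) -> int:
    """Days left before the high-risk deadline (0 once it has passed)."""
    return max(0, (HIGH_RISK_DEADLINE - today).days)
```

A countdown like `days_until_high_risk_deadline(date.today())` is a simple number to put at the top of your compliance spreadsheet.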

The key lies in knowing how to classify your use cases. Using ChatGPT to draft emails has minimal requirements (basically none). Using it to decide whom to hire or fire is high-risk and requires full compliance by 2026.

Be careful with this: “But we only use ChatGPT for emails and summaries,” you might say. Perfect, that is minimal risk and has basic or no requirements. No drama there. But what if someone in your company starts using it to filter candidates, evaluate performance, decide on credit or financing, score customers for essential services, or make automated decisions that affect fundamental rights? That use automatically becomes a high-risk system.

By August 2026, a high-risk use can require a fundamental rights impact assessment (mandatory for certain deployers), documented human oversight, continuous monitoring, logs kept for at least 6 months, and strict GDPR compliance. The problem is not using AI. The problem is not knowing what level of risk you have.
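The list above works well as a simple gap check per high-risk system. This is only a sketch: the field names are hypothetical, and treating "6 months" as 183 days is my own approximation, not a figure from the regulation.

```python
from dataclasses import dataclass

# Assumption: "at least 6 months" approximated as 183 days.
MIN_LOG_RETENTION_DAYS = 183


@dataclass
class HighRiskReadiness:
    """Self-reported status of one high-risk AI system (hypothetical fields)."""
    impact_assessment_done: bool
    human_oversight_documented: bool
    monitoring_in_place: bool
    log_retention_days: int
    gdpr_review_done: bool


def open_gaps(status: HighRiskReadiness) -> list[str]:
    """Return the items from the article's list that are still missing."""
    gaps = []
    if not status.impact_assessment_done:
        gaps.append("fundamental rights impact assessment")
    if not status.human_oversight_documented:
        gaps.append("documented human oversight")
    if not status.monitoring_in_place:
        gaps.append("continuous monitoring")
    if status.log_retention_days < MIN_LOG_RETENTION_DAYS:
        gaps.append("logs kept for at least 6 months")
    if not status.gdpr_review_done:
        gaps.append("GDPR compliance review")
    return gaps
```

An empty list does not mean you are compliant; it only means none of these five headline items is obviously missing.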

How to Start (Without Going Crazy in the Process)

AI governance sounds intimidating, but starting is simpler than you think. The ideal way is to begin by making an inventory of all the AI tools your company uses. And when I say all, I mean obvious subscriptions like ChatGPT or Copilot, but also department-specific tools (HR, sales, marketing), integrated systems (your CRM probably has AI), and above all “Shadow AI” (ask your teams what they use even if it’s not officially approved, because you’ll be surprised).
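A spreadsheet is enough for this inventory, but if you prefer to generate it from a script, here is one possible shape. The column names and the `officially_approved` flag (used to surface "Shadow AI") are illustrative choices of mine, not a required format.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class AITool:
    """One row of the AI inventory (hypothetical columns)."""
    name: str
    department: str
    purpose: str
    officially_approved: bool  # False marks "Shadow AI"


def inventory_to_csv(tools: list[AITool]) -> str:
    """Serialize the inventory to CSV text that any spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(AITool)])
    writer.writeheader()
    for tool in tools:
        writer.writerow(asdict(tool))
    return buffer.getvalue()


def shadow_ai(tools: list[AITool]) -> list[AITool]:
    """Return the tools in use that were never officially approved."""
    return [tool for tool in tools if not tool.officially_approved]
```

The point of the approval flag is that unapproved tools stay in the list instead of being hidden, so you can decide what to do about them.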

Once you have the list, it’s time to classify the basic risk. For each tool, ask yourself if it makes decisions about people (hiring, evaluation, credit), if it affects fundamental rights, if it generates public content, or if it processes personal data. There are free tools to help you classify the risk, so you don’t have to be a specialized lawyer to start.
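The four questions above can be turned into a rough first-pass triage. To be clear, this is a sketch under my own assumptions, not a legal classification: the labels and the order of the checks are illustrative, and anything that lands in the top bucket should go to someone qualified to assess it.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    """Answers to the four screening questions for one AI use case."""
    name: str
    decides_about_people: bool        # hiring, evaluation, credit
    affects_fundamental_rights: bool
    generates_public_content: bool
    processes_personal_data: bool


def triage(use_case: UseCase) -> str:
    """Return a rough risk bucket; the strictest matching bucket wins."""
    if use_case.decides_about_people or use_case.affects_fundamental_rights:
        return "potentially high-risk: escalate for review"
    if use_case.generates_public_content:
        return "transparency obligations likely"
    if use_case.processes_personal_data:
        return "minimal AI Act risk, but check GDPR"
    return "minimal risk"
```

Checking the strictest condition first matters: a tool that both drafts public content and screens candidates should be flagged for the screening, not the drafting.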

Next, you need to document a basic usage policy. We are not talking about a 200-page manual (honestly, no one will read it). A well-crafted single page that clarifies who can use which tools, which uses are expressly prohibited (classic example: never upload customer data to free ChatGPT), the obligation to review outputs before using them in important decisions, and whom to report to if something goes wrong.

And here comes something important: basic AI training is not optional. The AI Act’s AI literacy duty has applied to providers and deployers since February 2025, whatever the risk level of the use case. You don’t need your people to do a Master’s in machine learning. You need them to know what AI is and what it is NOT (surprisingly, many people are not clear on this), its main limitations (hallucinations, biases), the basic legal obligations according to the use they give it, and when they should ask for help instead of improvising. Beyond the legal duty, a well-trained team is also a competitive advantage.

When Lack of Governance Costs You Dearly

Air Canada’s chatbot promised a discount that did not exist. The customer sued. Air Canada argued in court that “the chatbot is a separate entity, it does not represent us.” The court basically told them: “You are responsible for the information your AI provides.” Air Canada had to pay.

Closer to home, the Spanish Data Protection Agency fined La Liga €250,000 and Osasuna €40,000 for using biometric systems without an adequate legal basis. And Amazon, which we might think knows what it’s doing with technology, had to scrap the CV-screening AI mentioned earlier after it learned to discriminate against women from mostly male historical CVs.

The lesson? It’s not just about “using AI.” It’s about knowing WHAT your AI does and HOW it does it.

The Myths That Are Costing You Money

“It only applies to large companies”. False. The AI Act applies to any company using AI in a professional context in the EU. It doesn’t matter if you have 5 or 5,000 employees. Fines do scale with your size (up to 7% of global turnover), but classification and basic compliance obligations apply to everyone.

“If I don’t sell in Europe, it doesn’t affect me”. If the output of your AI is used in the EU (to serve customers, manage employees, or work with partners there), it can apply to you. Just like GDPR, which caught many American and Latin American companies off guard. Spain is also leading enforcement with AESIA, the first national AI supervisory agency in the EU, so this is serious.

“ChatGPT has its own policies, I am not responsible”. You are responsible as a “deployer.” If one of your employees uses ChatGPT for business decisions and causes harm, you cannot simply say “it’s OpenAI’s fault.” OpenAI is the “provider” with its obligations; you are the “deployer” with yours. They are different roles with different responsibilities.

“Implementing governance paralyzes innovation”. On the contrary. Companies with clear governance innovate FASTER because they know what they can do without legal risk, they have established processes for testing new things (including regulatory sandboxes, where authorities offer them), and above all, they don’t waste months undoing poorly executed implementations when they realize there’s a problem.

30-Day Practical Plan

You don’t need to hire expensive consultants or stop your business. A good strategy is to start with visibility. The first week, send an email to all managers with a simple question: “List all the AI tools your team uses.” Also review subscriptions and expenses looking for AI tools you might have bought months ago and forgotten. Document everything in a simple spreadsheet (no need for a sophisticated system yet).

The second week is for classification. Use the EU AI Act Compliance Checker, which is free. Mark in red any use that is clearly high-risk (HR, credit, automated decisions about people). For red cases, the ideal is to take a temporary pause until you evaluate the necessary compliance (believe me, it’s better to pause for a week now than to deal with a surprise audit later).

The third week is the time to establish basic rules. Document a one-page policy that clarifies what can be done, what cannot be done under any circumstances, and who has the authority to authorize new uses. Define responsible parties by department, because if “everyone is responsible,” no one really is. And communicate this to the entire company, not just the managers.

Dedicate the fourth week to minimal training. A one-hour session for everyone on basic concepts, main risks, and obligations. A longer two-hour session for frequent users with best practices and real cases. And document attendance, because if a regulator or client ever asks how you ensure AI literacy, you will be glad to have it on record.

After this initial month, next steps include performing impact assessments for high-risk systems, implementing technical controls (logs, monitoring), and establishing a quarterly review of new tools. But you can tackle those at a calmer pace, with a clear picture of where you stand.

The Opportunity No One Sees

Here is the secret that few companies understand: well-executed AI governance is NOT a cost. It is a massive competitive advantage.

Your B2B clients are increasingly asking things like “How do you manage AI in your processes?”, “Can you certify that your systems are secure?”, or “How do you guarantee there are no biases in your automated decisions?”. Companies that can respond with solid documentation, clear processes, and official certifications win contracts. Those that respond “uhhh, we use ChatGPT but we don’t know exactly how” lose them (and I’ve seen this happen more times than I’d like to admit).

ISO 42001, the AI management certification, already exists. Companies obtaining it are using it in their marketing materials as a differentiator. In regulated sectors like banking, health, insurance, or government, AI governance is rapidly moving from “nice to have” to “without this, don’t even bother bidding.”

Your Next Step

Do not leave this for “when I have time.” Although full obligations for high-risk systems arrive in 2026, waiting until the last moment is a bad strategy. You need real time to correctly classify your systems. If you discover you have high-risk cases, changes in processes and documentation are not made in two weeks. And your B2B clients are already asking about this in sales conversations.

The competitive advantage belongs to those who get ahead, not to those scrambling in 2026.

If after reading this you think “we need help to implement this correctly,” that is exactly the right insight. AI governance is not a weekend project (though some may try). The ideal is to start this very week with concrete steps: download the AI Act compliance checklist, dedicate two hours to making an inventory of AI systems, classify your use cases by risk level, and if you find something high-risk, design a realistic compliance plan for 2026.

You have until 2026 to be compliant with high-risk systems, but the sooner you start, the less chaos you will face. Need help evaluating your situation? Let’s talk. A diagnostic consultancy can literally save you months of work by correctly classifying your systems and designing a roadmap that makes sense for your specific reality.

Because the question is not “should I implement AI governance?”

The question is: Do I want to lead or be left running behind the regulation in 2026?