Stanford AI Index 2026: AI Accelerates, But Who's in Control?

The Paradox of Unchecked Progress

The Stanford AI Index 2026 has just confirmed what many of us suspected: AI is advancing at historic speed, but our ability to measure, manage, and secure it is falling behind. It's no longer a question of whether AI will transform your business, but whether you'll be ready when it does.

The 423-page report published by Stanford's Institute for Human-Centered Artificial Intelligence reveals an industry in full swing: record investment, faster adoption than PCs or the Internet, and technical capabilities that have reached impressive milestones in science and mathematics.

But there's another side to the coin: a growing gap between what models can do and the rigor with which they are evaluated for potential harm.

1. Mass Adoption: The Hidden Risk Behind the Numbers

The data: Generative AI reached 53% adoption among the population in just three years, faster than either PCs or the Internet. The value consumers derive from generative AI grew 54% in one year, reaching $172 billion annually in the United States alone.

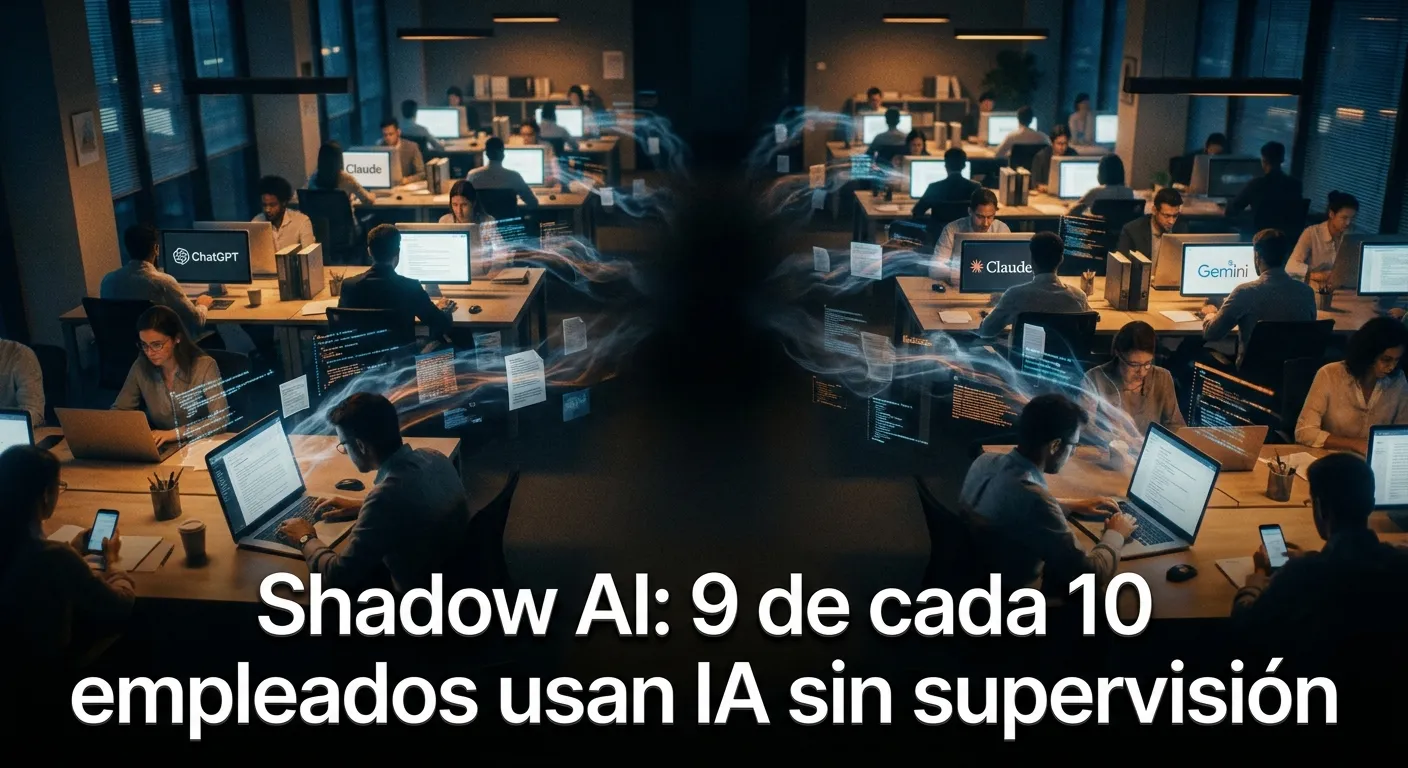

The problem: This explosive adoption hides a dangerous reality. Organizational adoption has reached 88%, but what the report doesn't capture is the "Shadow AI" phenomenon: employees using AI tools without supervision, clear policies, or control over corporate data.

The question you must ask yourself: Is your company using AI or is it being used by it? There's a critical difference between adoption and control.

2. The Reliability Dilemma: When the Most Powerful Models Are the Least Transparent

The critical data: Error rates in benchmarks can reach 42% in widely used evaluations like GSM8K. Current models still fail to consistently distinguish knowledge from belief, putting critical processes in medicine, law, and finance at risk.

The opaque reality: Industry produces 90% of frontier models, but these models are becoming less and less transparent about their training data and code. Of 95 representative models, 80 do not disclose their training code.

For your business: You can't build strategic decisions on algorithmic "black boxes." Transparency isn't an academic luxury; it's an operational necessity.

3. Lagging Security: The Numbers No One Wants to See

The alarming data: Documented AI incidents rose from 233 in 2024 to 362 in 2025, a 55% increase. The six-month moving average reached 326 incidents, with a peak of 435 in a single month.
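For readers who want to check the headline growth figure themselves, it is a simple relative change between the two yearly counts quoted here. A minimal Python sketch (the helper name `percent_change` is our own illustration, not something from the report):

```python
def percent_change(old: float, new: float) -> float:
    """Return the relative change from old to new, as a percentage."""
    return (new - old) / old * 100


# Yearly counts of documented AI incidents, as quoted from the AI Index 2026
incidents_2024 = 233
incidents_2025 = 362

change = percent_change(incidents_2024, incidents_2025)
print(f"{change:.1f}%")  # prints 55.4%
```

Rounded to the nearest whole number, this gives the 55% increase cited above.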

The critical gap: Almost all frontier model developers report results on capability benchmarks. The same is NOT true for responsible AI benchmarks. The report's safety benchmark table is mostly empty: only Claude Opus 4.5 reports results on more than two responsible AI benchmarks.

The message: The risk isn't in the future; it's here today. Companies that assume "the big labs already handle security" are betting their reputation (and their data) on blind faith.

4. Regulation in Transition: Beyond GDPR

The paradigm shift: Interest in GDPR has dropped slightly (from 65% to 60%), while AI-specific standards like ISO/IEC 42001 (36%) and NIST's AI Risk Management Framework (33%) are rapidly gaining ground.

What it means: Legal compliance in AI is no longer just "checking the GDPR box." Regulators and the market are demanding specific frameworks for the unique risks posed by artificial intelligence.

Your competitive advantage: Companies that get ahead of this regulatory transition won't just avoid fines; they'll earn customer trust and an edge over their competitors.

5. The US-China Gap Closes: What No One Expected

The surprising data: US and Chinese models have traded leadership positions multiple times since early 2025. As of March 2026, the top US model leads the top Chinese model by just 2.7%.

The geopolitical reality: China now leads in publication volume, citation share, and patent grants. DeepSeek-R1 briefly matched the best US model in February 2025.

For global companies: AI competition is no longer bipolar. Technology strategies must account for a multipolar ecosystem where innovation comes from multiple geographies.

6. Public Perception vs. Reality: The Disconnect Grows

The divide: Nearly two-thirds of Americans (64%) expect AI to lead to fewer jobs over the next 20 years, while only 5% expect more. Experts are less pessimistic (39% foresee fewer jobs).

The real labor impact: Entry-level positions have dropped sharply, with software development jobs for workers aged 22 to 25 falling by 20%.

Your responsibility: AI is not neutral. Companies that want to adopt AI responsibly need to be transparent about its impact on their workforce and put retraining programs in place.

Conclusion: Passenger or Pilot?

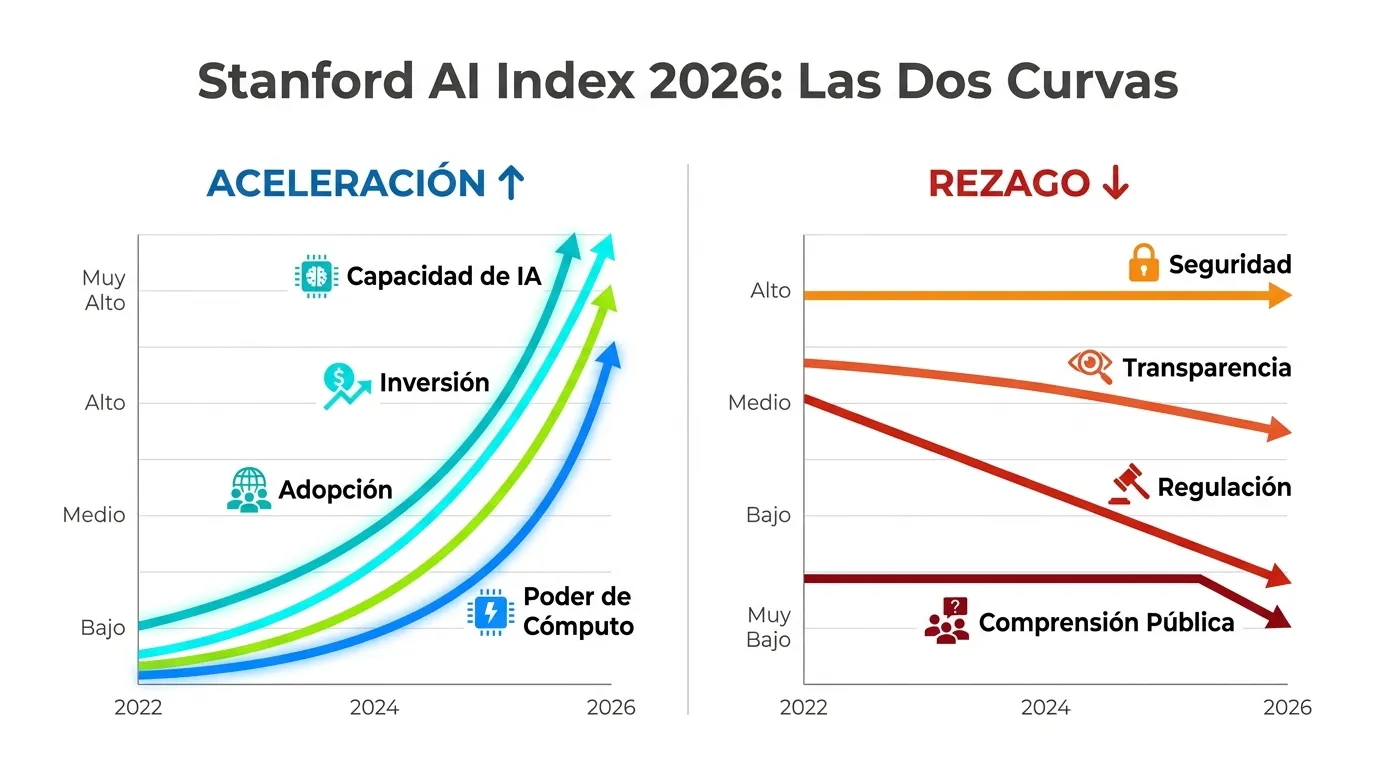

The Stanford AI Index 2026 paints a clear picture: AI is accelerating, and everything else is falling behind.

If we condensed the report into a single graph, it would show exponentially rising curves for model capability, computing power, investment, and adoption, alongside flat or declining curves for transparency, security, public understanding, and regulatory capacity.

The question is no longer "What will the future bring?" but "Which curve are you on?"

At Montevive.AI, we believe you don't have to choose between innovation and security. Our mission is simple: 100% AI, 99% security. We help companies stay on the rising curve of capability without slipping onto the declining curve of control.

Shall we talk about how to take control of your AI before it takes control of you?

Learn More:

- Download the full report (423 pages, PDF)

- 12 key takeaways from the AI Index 2026 (Stanford HAI)

- Technical analysis of the report (Performance and benchmarks)

Need to evaluate Shadow AI risk in your company? Contact us for a no-obligation initial assessment.